CS 344 and CS 386: Artificial Intelligence

(Spring 2018)

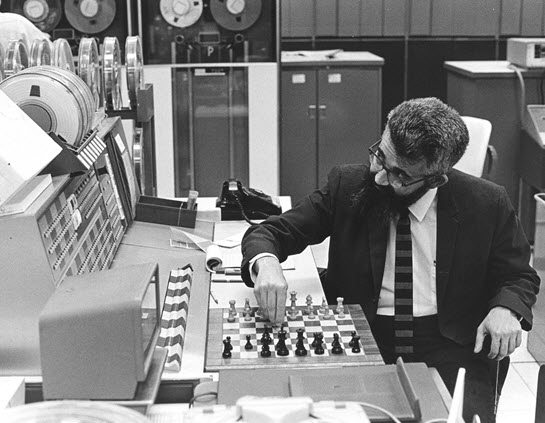

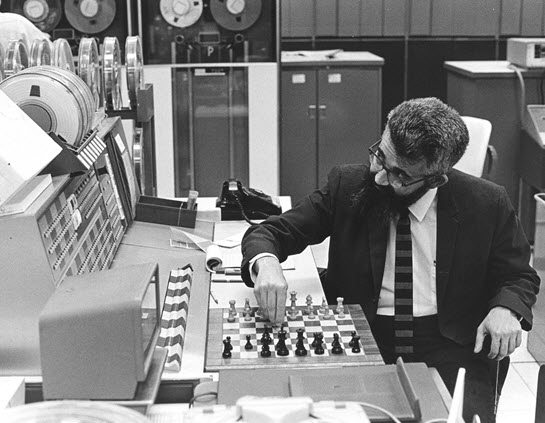

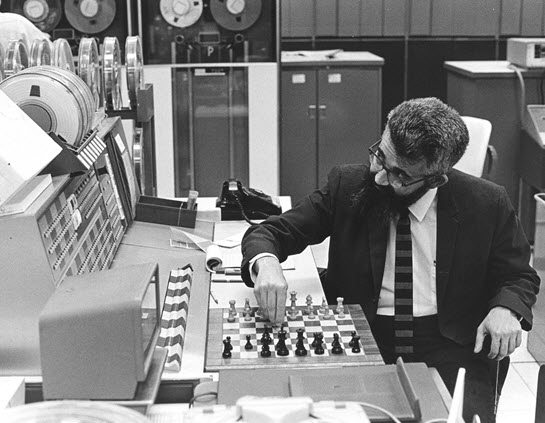

(Picture source: http://amturing.acm.org/images/mccarthy-2.jpg.)

This page serves as the primary resource for CS 344 (Artificial Intelligence) and CS 386 (Artificial Intelligence Lab).

Instructor

Shivaram Kalyanakrishnan

Office: Room 220, New CSE Building

Phone: 7704

E-mail: shivaram@cse.iitb.ac.in

Teaching Assistants

Pragy Agarwal (E-mail: 163050017@iitb.ac.in).

Abhilash Panicker (E-mail: 163050016@iitb.ac.in).

Akshay Arora (E-mail: 173050021@iitb.ac.in).

Avinash Modi (E-mail: 173050017@iitb.ac.in).

Sagar Ashokrao Tikore (E-mail: 173050048@iitb.ac.in).

Bhavani Vishal Babu (E-mail: 140050049@iitb.ac.in).

Sohum Dhar (E-mail: 140070001@iitb.ac.in).

Naru Divakar Reddy (E-mail: 140050044@iitb.ac.in).

Chinthakindi Sai Chetan (E-mail: 140050066@iitb.ac.in).

Class Meetings

Lectures will be held in 103, New CSE Building, in Slot 6:

11.05 a.m. – 12.30 p.m. Wednesdays and

Fridays.

Lab sessions will be held in Software Lab 2, New CSE Building,

during Slot L4: 2.00 p.m. – 4.55 p.m. Fridays.

The instructor's office hours will immediately follow class

lectures and labs. Meetings can also be arranged by appointment.

Course Description

Artificial Intelligence (AI) surrounds us today: in phones that

respond to voice commands, programs that beat humans at Chess and Go,

robots that assist surgeries, vehicles that drive in urban traffic,

and systems that recommend products to customers on e-commerce

platforms. This course aims to familiarise students with the breadth

of modern AI, to impart an understanding of the dramatic surge of AI

in the last decade, and to foster an appreciation for the distinctive

role that AI can play in shaping the future of our society.

The course will provide a historical perspective of the field of AI

and discuss its foundations in search, knowledge representation and

reasoning, and machine learning. A small selection of specialised

topics will also be taken up; these could include, for example, speech

and natural language processing, robotics, crowdsourcing, computer

vision, and multiagent systems. The theory and lab components will

proceed in step to equip students with the knowledge and skills to

design and apply solutions based on AI.

Students interested in gaining more depth are encouraged to follow

this basic course with advanced ones on topics such as machine

learning, information retrieval and data mining, sequential decision

making, robotics, speech and natural language processing, computer

vision, and game theory.

Eligibility

CS 344 and CS 386 are core courses in the CSE undergraduate

programme. They can only be taken by CSE B.Tech. students in their

third (or higher) year.

Evaluation

CS 344 will have four class tests (each 15 marks), a mid-semester

examination (20 marks), and an end-semester examination (35

marks). The best four scores out of the class tests and the

mid-semester examination will contribute 65 marks towards the final

grade; the end-semester examination will contribute 35 marks towards

the final grade.
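
The arithmetic above can be sketched as follows. This is only an illustration under one stated assumption: that the "best four" are chosen by raw marks from the pooled class test scores (out of 15 each) and the mid-semester score (out of 20). The page does not say whether scores are rescaled before comparison, and the function name `cs344_total` is hypothetical.

```python
def cs344_total(class_tests, mid_sem, end_sem):
    """Combine CS 344 component scores into a total out of 100.

    Assumption (not stated on this page): the best four scores are picked
    by raw marks from the four class tests and the mid-semester examination.
    """
    class_tests = list(class_tests)
    if len(class_tests) != 4:
        raise ValueError("expected exactly four class test scores")
    # Pool the five in-semester scores and keep the four largest.
    pooled = sorted(class_tests + [mid_sem], reverse=True)
    best_four = sum(pooled[:4])
    # The end-semester examination always counts in full.
    return best_four + end_sem


print(cs344_total([12, 9, 14, 10], mid_sem=16, end_sem=28))  # prints 80
```

Under this reading, the 65-mark ceiling is reached only when the mid-semester examination is among the four scores counted (20 + 15 + 15 + 15).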

Grades for CS 386 will be decided based on 8–10 lab

assignments, each worth 10–15 marks.

Academic Honesty

Students are expected to adhere to the highest standards of

integrity and academic honesty. Acts such as copying in the

examinations and sharing code for the lab assignments will be dealt

with strictly, in accordance with the institute's procedures and disciplinary actions for academic malpractice.

Texts and References

Artificial Intelligence: A Modern Approach, Stuart J. Russell and Peter Norvig, 3rd edition, Pearson, 2010.

The Elements of Statistical Learning, Trevor Hastie, Robert Tibshirani, and Jerome Friedman, 2nd edition, Springer, 2009.

Communication

This page will serve as the primary source of information regarding

CS 344 and CS 386, their schedules, and related announcements. The

Moodle pages for these courses will be used for sharing additional

resources for the lectures and assignments, and also for recording

grades.

E-mail is the best means of communicating with the instructor;

students must send e-mail with "[CS344]" in the header, with a copy

marked to the TAs.

Class Schedule

-

January 5: Welcome; Introduction to the course; AI: past,

present, and future.

Reading: Chapter 1, Russell and Norvig

(2010); Slides.

-

January 10: Search.

Reading: Chapter 3, Russell and Norvig (2010).

Summary: Illustrative search problems; Search problem

instances; Search tree and template for graph search algorithms.

-

January 12: Search.

Reading: Pieter Abbeel's illustration of A* search.

Summary: BFS, UCS, DFS; Heuristics and A* search.

-

January 17: Search.

Reading: Sections 5.1, 5.2, Russell and Norvig (2010).

Summary: Admissibility and consistency; Optimality of A* search; Game trees.

-

January 19: Search.

Reading: Sections 5.3, 5.4, Russell and Norvig

(2010); Pieter

Abbeel's illustration of alpha-beta pruning.

Summary: Minimax search; Alpha-beta pruning; Evaluation functions; Lookup tables; Forward pruning.

-

January 24: Search.

Reading: Section 4.1, Russell and Norvig (2010).

Summary: Chance nodes and Expectiminimax algorithm; Incomplete information games; Traveling Salesperson Problem; Hill climbing.

-

January 31: Class Test 1; Search.

Summary: Genetic algorithms.

-

February 2: Planning.

Reading: Class Note 1.

Summary: Markov Decision Problems; Policies; Value functions.

-

February 7: Planning.

Summary: Bellman's Equations; Optimal policies and Optimal value function; Value Iteration algorithm.

-

February 9: Probabilistic reasoning.

Reading: Chapter 13, Russell and Norvig (2010).

Summary: Limitations of Boolean logic in representing knowledge;

Requirement of consistency of beliefs with the axioms of

probability; Random variables; Joint distributions;

Marginalisation; Conditional probabilities.

-

February 14: Probabilistic reasoning.

Summary: Bayes' rule; Independence; Conditional independence;

Introduction to Bayes Nets.

-

February 16: Class Test 2; Probabilistic reasoning.

Summary: Semantics of Bayes Nets; Computing joint probabilities.

-

February 21: Probabilistic reasoning.

Reading: Sections 14, 14.1, 14.2, 14.4, 14.4.1, Russell and Norvig (2010); Class Note 2.

Summary: Modeling joint probability distributions as Bayes Nets; Bayesian Inference.

-

February 23: Probabilistic reasoning.

Reading: Pieter Abbeel's lecture on conditional independence and D-separation.

Summary: Bayesian inference in Dynamic Bayes Nets; D-separation.

-

March 1: Mid-semester examination.

-

March 7: Probabilistic reasoning.

Reading: Sections 14.5.1, 15.5.3, Russell and Norvig (2010).

Summary: Random sampling; Sampling in Bayes Nets; Inexact inference; Overview of particle filtering.

-

March 9: Probabilistic reasoning.

Summary: Particle filtering.

-

March 14: Probabilistic reasoning; Learning.

Reading: Section 14.5.2, Russell and Norvig (2010).

Summary: Likelihood weighting; Gibbs Sampling; Learning to separate linearly separable points.

-

March 16: Learning.

Reading: Class Note 3.

Summary: Linear separability; Perceptron; Perceptron Learning Algorithm; Proof of convergence.

-

March 21: Learning.

Reading: Section 18.7, Russell and Norvig (2010).

Summary: Artificial neurons; Neural networks as parameterised functions.

-

March 23: Learning.

Summary: Calculation of error gradient; Backpropagation algorithm.

-

March 28: Class Test 3.

-

April 4: Learning.

Reading: Sections 18.1, 18.2, 18.4, 18.11, Russell and Norvig (2010); Wikipedia entry on Confusion matrix.

Summary: Parallelising backpropagation using vectors and matrices; Setting initial weights; Parameter-tuning and cross-validation; Classification and regression; Handling multiple classes; Confusion matrix.

-

April 6: Learning.

Reading: Class Note 4.

Summary: k-means clustering problem; k-means clustering algorithm; Proof of convergence.

-

April 11: Learning.

Reading: Slides.

Summary: Reinforcement learning problem; Review of MDPs; Planning and learning; Q-learning algorithm; Approximating the Q function using neural networks; Applications.

-

April 11: Invited talk by Arjun Jain: Computer Vision.

-

April 18: Class Test 4; Learning.

Reading: Is your Linked Open Data 5 Star? (ignore preceding sections on the page).

Summary: Popular exalted view of AI/ML versus simple techniques that often suffice; Relative importance of data over algorithms.

Reference: Scaling to Very Very Large Corpora for Natural Language Disambiguation.

-

April 20: Learning.

Reading: Andrew Zisserman's note on logistic regression (ignore sections 2, 3, and 4); Section 18.3, Russell and Norvig (2010); Wikipedia page on Bagging.

Summary: Logistic regression, Decision trees, Bagging.

-

May 3: End-semester examination.

Lab Assignments and Schedule

Students are expected to complete each lab assignment within the

corresponding lab slot. Submissions must be uploaded to Moodle in

the format specified.

If a submission is not made by 5.00 p.m., a "carry over"

will be counted against the assignment. Assignments that are carried

over will only be evaluated after the student attends a session with a

TA or the instructor to explain their submission and demonstrate its

working. A special lab session will be announced to evaluate

carry-over assignments.

A student may carry over up to two lab assignments without any

penalty. A third carry-over will incur a penalty of 2 marks; a fourth

carry-over will incur a penalty of 4 marks; subsequent carry-overs

will incur a penalty of 6 marks.
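
The penalty rule above can be sketched as a small calculation. The sketch assumes that penalties apply per carry-over and accumulate, and that "subsequent carry-overs" means 6 marks for each carry-over from the fifth onwards. The page does not confirm either reading, and both function names are hypothetical.

```python
def carry_over_penalty(n):
    """Penalty in marks for a student's n-th carry-over (n = 1, 2, 3, ...)."""
    if n < 1:
        raise ValueError("carry-overs are counted from 1")
    if n <= 2:
        return 0  # the first two carry-overs are free
    if n == 3:
        return 2
    if n == 4:
        return 4
    return 6  # assumed: 6 marks for each carry-over from the fifth onwards


def total_penalty(num_carry_overs):
    """Accumulated penalty, assuming the per-carry-over penalties add up."""
    return sum(carry_over_penalty(n) for n in range(1, num_carry_overs + 1))


print(total_penalty(5))  # prints 12 (0 + 0 + 2 + 4 + 6)
```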

Below is the schedule for lab assignments.